My Claude Code Setup for PMs and Founders

Two plugins, plan mode, and the discipline to stop configuring: a setup from a Stripe PM and ex-founder.

I wrote this in late January 2026. Since then, context windows have grown to 1M tokens, I’ve built custom MCPs, and I have far more skills than the three I describe here. A follow-up is coming. But the philosophy hasn’t changed, even as the tools have.

The Psychosis of Infinite Possibility

Andrej Karpathy said something on No Priors that stuck:

“This is why it gets to the psychosis, is that this is like infinite and everything is skill issue.”

When I first wrote this in January, I didn’t feel the psychosis. The constraint was obvious: you had a limited context window, and after a certain number of turns the agent would drift and lose the thread. The ceiling was visible. I spent my energy keeping the model on track, not dreaming about what else it could do.

Two months later, I feel it. Context windows are massive. The models hold coherence far longer. Agents can execute nearly anything I describe. Every limitation feels like my failure to direct them properly.

That’s where it becomes dangerous. Collecting skills and plugins on X is productive procrastination. You feel like you’re making progress. You’re not. You’re avoiding the harder thing: sitting down with a blank project and building something real.

I know because I did this. I already had compound-engineering installed and working. It was enough. But I spent another week hunting for something better (hence never posting this article). Classic Notion syndrome: planning the system instead of using it.

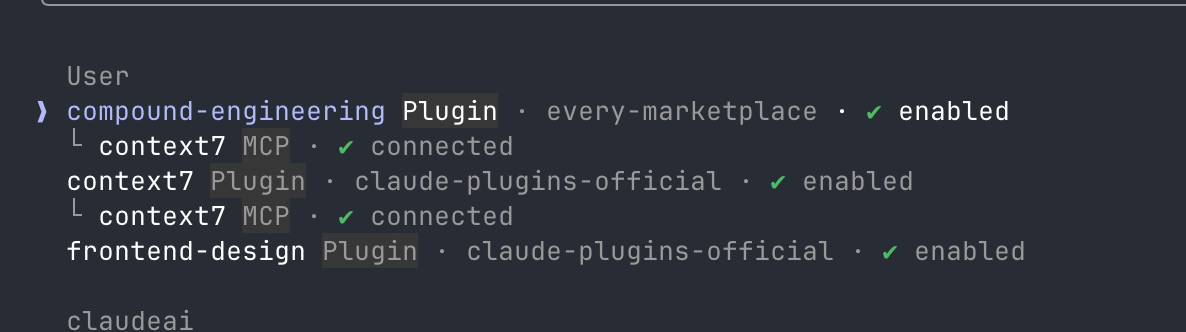

Two Plugins and Nothing Else

compound-engineering gives you specialised subagents: code reviewers, research workflows, security audits, architecture analysis. It bundles Context7 for documentation lookup. You won't use most of them. But when you need a security audit or want multiple agents reviewing a plan, they're there.

frontend-design forces Claude to think about aesthetics before code. Not just “make it work,” but “make it distinctive.”

CLAUDE.md: Less Is More

In January, I poured effort into my CLAUDE.md files. Long, detailed instructions. Guardrails, formatting rules, tone guidance, workflow descriptions. I treated them like onboarding documents for a new hire.

Now I keep them simple. A few lines of context, the key conventions, nothing else. The models are good enough that heavy direction hurts more than it helps. I end up fighting my own instructions.

Here’s the CLAUDE.md for my Obsidian vault:

# Vault

This is my personal knowledge vault in Obsidian.

- Notes live in folders by topic

- Use [[wikilinks]] for connections

- Daily notes go in /daily

## Vault Structure

00 Inbox/ Raw, unprocessed captures

20 projects/ Work execution + side projects (each may have its own CLAUDE.md)

30 Thinking/ Abstracted insights, mental models, reusable principles

40 Writing/ Drafts and long-form content for an audience

50 Life/ Personal reflection, health, travel, goals

60 People/ CRM — one note per person, tagged #person

90 Archive/ Cold storage — completed projects, legacy notes

**Abstraction rule (5-year heuristic):** If an insight is valuable regardless of where you work or who you're with in 5 years → `30 Thinking/`. If it's specific to a time/person/company → keep it in `20 projects/`, `50 Life/`, or `60 People/`.

## Key Rules

**People (`60 People/`):** Every person gets one note named `[[Full Name]]` with `#person`. Store static facts there (birthday, career, quirks). Long meeting notes live in `20 projects/Areas/1-on-1s/` and link back to the person.

**Writing (`40 Writing/`):** When writing long-form content:

1. Create `40 Writing/[Title]/` folder

2. Research goes in `Research.md`, final post in `[Title].md`

3. Always read `40 Writing/Writing Guide.md` first — source of truth for voice and style

4. Two modes: `/essay` (story-driven, metaphor-rich) and `/article` (tactical, problem-first)

5. Named sections, varied rhythm, close with `– Yubi`

6. Post-draft: optionally run `/humanizer` then `/zinsser-editor`

Short-form content (tweets, X posts) is written directly without this workflow.

**No orphans:** Every note links to at least one other note or index.

And here’s one for a bigger work project, a money movement QA agent:

# FA Agent

AI QA agent for Stripe's V2 Money Management APIs. Runs end-to-end test scenarios against sandbox using MCP tools that map to the Financial Accounts API surface.

Test scenarios live in `context/test-scenarios/`.

## Conventions

- **Amounts** are in smallest currency unit (e.g., `100000` = 1,000.00 GBP)

- **API version**: `Stripe-Version: 2026-03-04.preview` (override via `FA_AGENT_API_VERSION`)

- **Sandbox only**: Test helpers (`credit_financial_address`, `generate_microdeposits`, etc.) only work in test mode.

## Test Reasoning Format

Every test step must capture reasoning, not just pass/fail:

- **Intent**: What and why (e.g., "Creating a GBP FA because the next step needs to fund in GBP")

- **Approach**: Specific action taken

- **Expectation**: What should happen

- **Outcome**: What actually happened

- **Learning**: What was surprising or wrong (omit if straightforward)

...etc

Short. Contextual. Enough for the model to orient, not so much that it re-reads a manual every turn. Trust the model more, micromanage less.
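The conventions in the FA Agent file map onto a small data structure. The sketch below is one possible reading of them, not the agent's actual code: `format_minor_units`, `StepRecord`, and `render` are hypothetical names, the approach, expectation, and outcome strings are placeholders, and the two-decimal default holds for GBP but not for every currency.

```python
from dataclasses import dataclass
from decimal import Decimal


def format_minor_units(amount: int, currency: str, exponent: int = 2) -> str:
    """Render an integer amount in the smallest currency unit for a human reader.

    Assumes `exponent` decimal places (2 for GBP); a zero-decimal currency
    would need exponent=0.
    """
    major = Decimal(amount).scaleb(-exponent)
    return f"{major:,.{exponent}f} {currency}"


@dataclass
class StepRecord:
    """One test step in the Intent / Approach / Expectation / Outcome / Learning format."""

    intent: str
    approach: str
    expectation: str
    outcome: str
    learning: str = ""  # left empty when the step was straightforward

    def render(self) -> str:
        fields = [
            ("Intent", self.intent),
            ("Approach", self.approach),
            ("Expectation", self.expectation),
            ("Outcome", self.outcome),
            ("Learning", self.learning),
        ]
        # Empty fields are skipped, so Learning disappears when there is none.
        return "\n".join(f"- **{label}**: {value}" for label, value in fields if value)


step = StepRecord(
    intent="Creating a GBP FA because the next step needs to fund in GBP",
    approach="Create the account in sandbox",       # placeholder wording
    expectation="The account is created",           # placeholder wording
    outcome="The account was created",              # placeholder wording
)
print(step.render())
print(format_minor_units(100000, "GBP"))
```

Keeping amounts as integers until the moment of display avoids floating-point rounding in the test logic itself.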

The Day-to-Day

I open a terminal. I run claude. By default, Claude asks permission for every action — git commits, file writes, web searches. I approve each one. Yes, yes, yes.

That’s fine for exploring. But when I know what I want, it’s friction.

So I created an alias:

alias claude="claude --dangerously-skip-permissions"

Now I have two speeds. Plan mode when I need to think. Default mode, now free of permission prompts, when I need to ship.

One setting I can't live without: the status line at the bottom of my terminal showing context usage percentage. And the --chrome flag, which connects Claude to my browser — it navigates pages, clicks elements, reads console output, and takes screenshots using my actual session.

Plan Mode Is the Real Superpower

Most bad outputs aren’t a model failure. They’re a communication failure. I assumed the agent understood the context. It didn’t.

In psychology this is called theory of mind — the ability to understand that someone else’s mental state differs from yours. Working with an agent is working with a colleague: does she have the same understanding as me? Plan mode forces that check. So does asking the model to interview me before it starts.

I use plan mode in two ways:

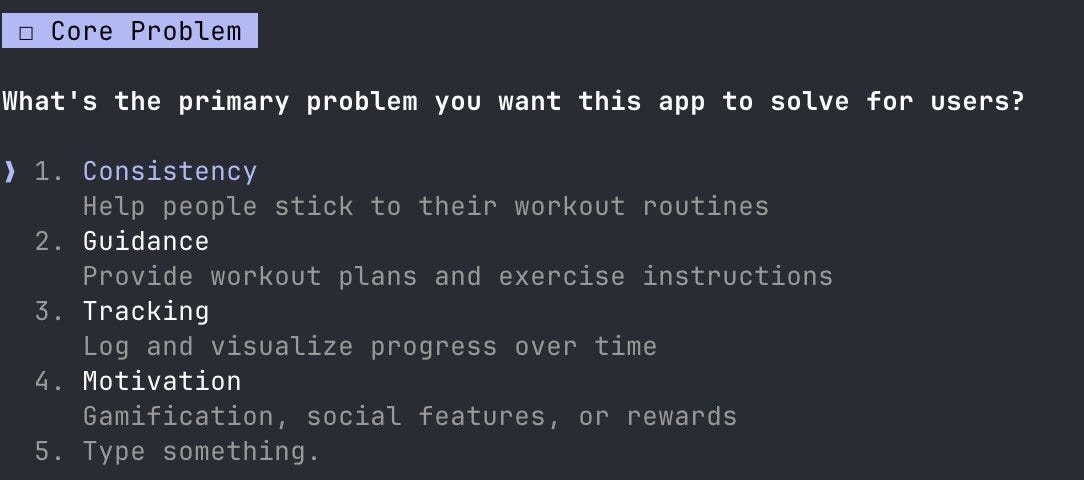

Plan mode for simple or non-technical work. Press Shift+Tab twice, or start with

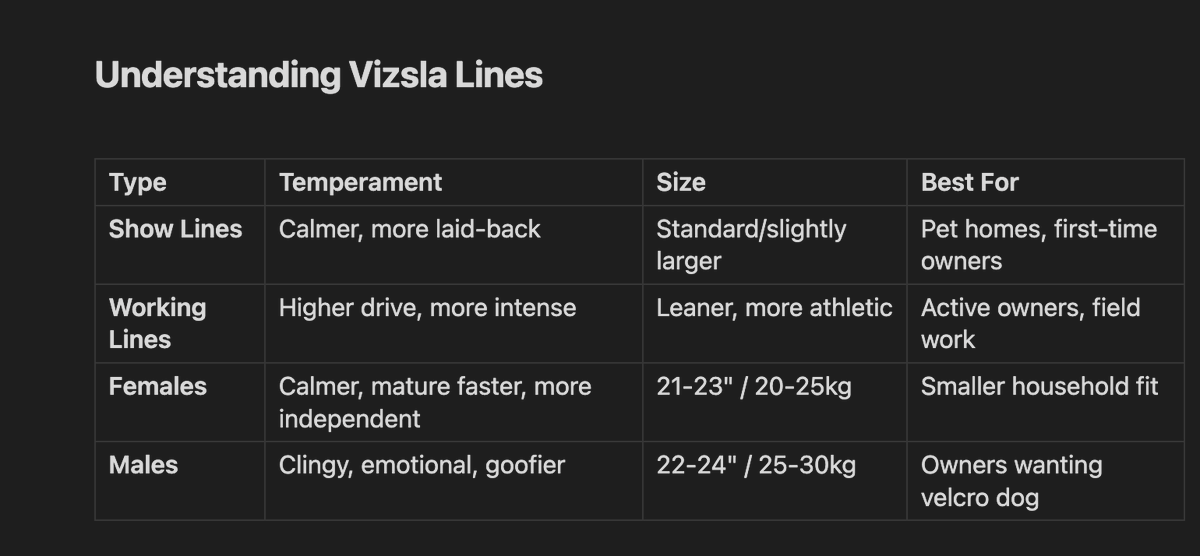

claude --permission-mode plan. Claude analyses the problem, proposes an approach, I approve before anything is written. I used this to research Hungarian Vizsla breeders in the UK — I asked for comparison tables, pricing guides, and a red flags checklist. But because I was in plan mode, Claude asked: what's my budget? Hunting line or show line? Registered breeders only? When do I want the puppy? The output was better because the model understood the problem before solving it. This works beyond code — research, YouTube strategy, anything where the approach matters.

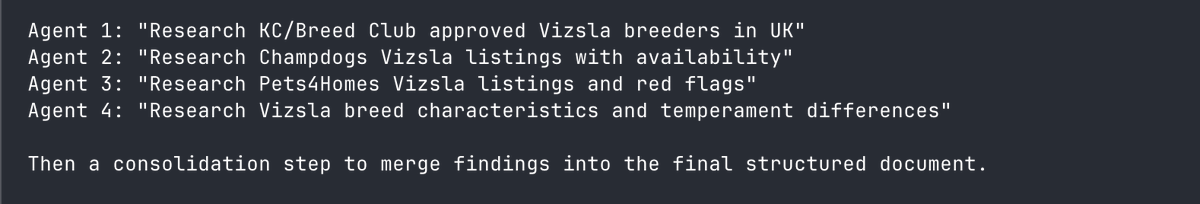

Compound plan mode for robust features that need depth. /compound-engineering:workflows:plan spawns multiple specialised agents that review the plan from different angles: architecture, performance, security, pattern consistency. Each reviewer returns findings. Claude synthesises them into a single implementation strategy. The difference between asking one colleague for feedback and running a design review with specialists.

Standard plan for everyday work. Compound for anything that can go wrong in non-obvious ways.
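The shape of a compound review is a fan-out followed by a merge. The sketch below illustrates only that shape and says nothing about how the plugin is implemented: the three reviewer functions are hypothetical keyword checks standing in for model-backed subagents, and `compound_review` is an invented name.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List


# Hypothetical reviewers: each takes the plan text and returns a list of findings.
def review_architecture(plan: str) -> List[str]:
    return [] if "module" in plan.lower() else ["architecture: module boundaries undefined"]


def review_performance(plan: str) -> List[str]:
    return [] if "cache" in plan.lower() else ["performance: no caching strategy"]


def review_security(plan: str) -> List[str]:
    return [] if "auth" in plan.lower() else ["security: plan never mentions auth"]


REVIEWERS: List[Callable[[str], List[str]]] = [
    review_architecture,
    review_performance,
    review_security,
]


def compound_review(plan: str) -> List[str]:
    """Send the plan to every reviewer in parallel, then merge the findings."""
    with ThreadPoolExecutor(max_workers=len(REVIEWERS)) as pool:
        # map() preserves reviewer order, so the merged list is deterministic.
        per_reviewer = pool.map(lambda review: review(plan), REVIEWERS)
        return [finding for findings in per_reviewer for finding in findings]


plan = "Add a payouts module with auth checks on every endpoint."
print(compound_review(plan))
```

Each reviewer sees the same plan and knows nothing about the others, which is what lets them run independently before a single synthesis step.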

Skills Over Agents

Skills are crystallised preferences. The things I’ve learned about how I like to work, encoded once so I never have to explain them again. I’m teaching an assistant how I think.

A skill is a markdown file (SKILL.md) in its own folder under ~/.claude/skills/. It has a name, a description, trigger conditions, and instructions. I ask Claude to create a skill for X and it generates the file. Same structure every time.
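A minimal sketch of that structure, under stated assumptions: it assumes a skill is a folder containing a `SKILL.md` whose YAML frontmatter carries `name` and `description`, with the trigger conditions written into the description. `render_skill` and `write_skill` are hypothetical helpers, the example wording is invented, and the file goes to a temporary directory rather than the real skills folder.

```python
import tempfile
from pathlib import Path


def render_skill(name: str, description: str, instructions: str) -> str:
    """Build the text of a skill file: YAML frontmatter, then markdown instructions."""
    return (
        "---\n"
        f"name: {name}\n"
        f"description: {description}\n"
        "---\n\n"
        f"{instructions.strip()}\n"
    )


def write_skill(root: Path, name: str, description: str, instructions: str) -> Path:
    """Write <root>/<name>/SKILL.md and return its path."""
    skill_dir = root / name
    skill_dir.mkdir(parents=True, exist_ok=True)
    path = skill_dir / "SKILL.md"
    path.write_text(render_skill(name, description, instructions), encoding="utf-8")
    return path


# A temporary directory stands in for ~/.claude/skills/ in this sketch.
root = Path(tempfile.mkdtemp())
path = write_skill(
    root,
    name="prd",
    description="Review a PRD for structure, clarity, edge cases, and dependencies. Use when asked to review a PRD.",
    instructions="1. Check the structure.\n2. Flag unclear requirements.\n3. List missing edge cases and dependencies.",
)
print(path.read_text(encoding="utf-8"))
```

Because every skill renders through the same template, reading or editing one by hand stays easy.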

At Stripe, I built a skill called /qa. Depending on the task type (quick, standard, or exhaustive end-to-end), the skill follows an exact path. Quick runs 3 core scenarios. Standard runs 6. Exhaustive runs all 10. Each run creates a report with a request ID and a Postman collection. Deterministic testing from a single command.

I have a weekly planning skill that reads my calendar, projects, and meetings. Monday morning, one command, full picture.

A /prd skill reviews PRDs against what I think a good PRD looks like — structure, clarity, edge cases, dependencies. Instead of explaining my review style every time, I wrote it down once.

/zinsser strips AI writing tells: the rule of three, excessive em dashes, words like “delve” and “tapestry”, and cuts unnecessary words. /article encodes my entire writing workflow: folder structure, research phase, rhythm, sign-off. Claude follows it automatically.

Every skill is an agent. The line between them is fuzzier than it looks. But skills are bounded, predictable, composable.

Rule of thumb: if I’ve hit the same friction three times, I create a skill.

If you want me to open-source these, I’m happy to share them.

What I’ve Actually Built

Too many guides get shared without showing what they produced. Here's a sample of what this setup made:

My partner and I were debating whether to buy or rent. So I built a Rent vs Buy calculator with UK-specific tax treatment, leasehold considerations, and investment opportunity cost modelling. We used it to make an actual decision.

My sister runs coaching workshops and has to administer different psychometric tests across her clients. So I built a single test that compressed 26 instruments from 600+ items down to 168 — a 72% reduction while preserving every subscale dimension. One test instead of twenty-six.
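The 72% figure can be checked in a few lines. This sketch assumes exactly 600 starting items; since the real count was "600+", the true reduction is at least that large. `percent_reduction` is a hypothetical helper.

```python
def percent_reduction(before: int, after: int) -> float:
    """Percentage of items removed when going from `before` to `after`."""
    return (before - after) / before * 100


# 600 is the assumed lower bound on the original item count.
print(round(percent_reduction(600, 168)))
```

A starting count above 600 only pushes the figure higher, so 72% is the conservative number.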

Our cleaner speaks Brazilian Portuguese, and I wanted to experiment with new open-source models. So I built real-time speech translation using Cartesia and TranslateGemma, under 250ms latency. I could add local accents into the mix.

At work: a money movement QA agent, a product legal agent, a policy review agent, etc.

Don’t Automate Yet

Get comfortable with the prompted cycle first. Too many people automate problems they haven’t worked through with the model. They build agent workflows for edge cases they’ve never prompted their way past. When something breaks, they have no intuition for where the failure is. Was it the prompt? The context? The tool call? The orchestration? I want that intuition. The only way to get it is to sit in the loop — prompting manually, watching how the model behaves — before handing anything off to an agent.

Since February I’ve built custom MCPs. A treasury testing server. A policy review server. A documentation server that works around authentication constraints. Each one came from repeated friction. I knew what to automate because I’d felt the pain first.

Frontend design is the gap I’m closing now. I want to spend real time with tools like paper.design, controlling the experience rather than accepting whatever the model gives me.

The people who ship are the ones who stopped configuring and started building. A good setup doesn’t announce itself. It disappears the moment you have something to make.

– yubi